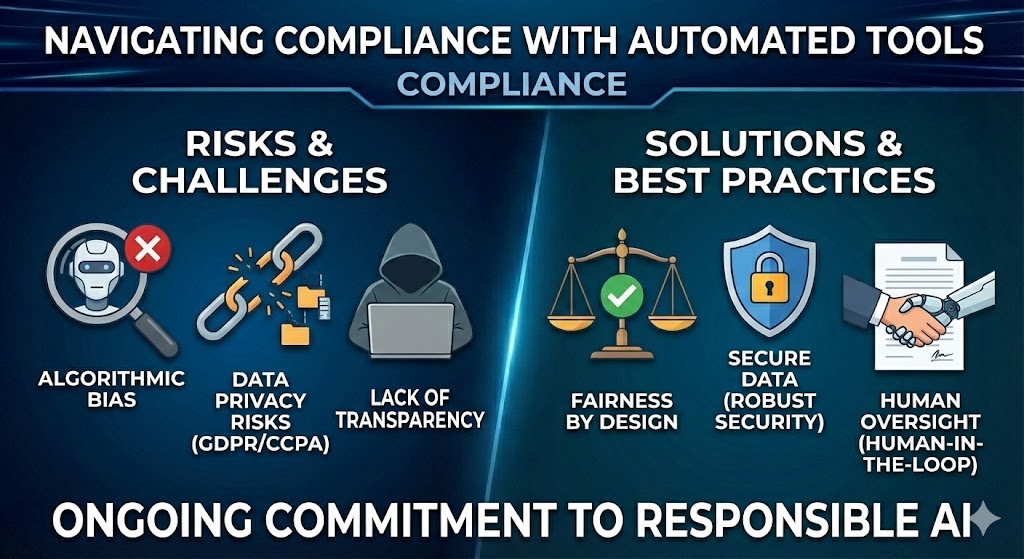

The rise of AI in recruitment brings incredible opportunities but also significant responsibilities. As automation becomes standard, ensuring your tools and processes comply with employment laws and regulations is paramount.

Understanding Algorithmic Bias

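One of the biggest concerns with AI in hiring is bias. If an algorithm is trained on historical data that contains bias, it can replicate those patterns. For example, a model trained on past hiring decisions that favored one group may score similar candidates from other groups lower. It's crucial to use tools that are designed with "fairness by design" principles and to regularly audit your AI's decision-making process.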
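
To make "regularly audit" more concrete, here is a minimal sketch of one common check: comparing selection rates across groups and flagging any group whose rate falls below four-fifths of the highest rate. The function names, the record format, and the 0.8 threshold are assumptions for illustration, not part of any specific tool, and a real audit would cover far more than this single metric.

```python
from collections import Counter

def selection_rates(records):
    """Compute the share of selected candidates per group.

    `records` is an iterable of (group, was_selected) pairs.
    This input format is a hypothetical one chosen for the sketch.
    """
    totals = Counter()
    selected = Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}

def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no candidates were selected in any group")
    return {group: rate / best for group, rate in rates.items()}

def flag_groups(ratios, threshold=0.8):
    """Return groups whose impact ratio is below the threshold.

    The 0.8 default follows the common "four-fifths" rule of thumb;
    treat it as an assumption, not a legal standard for your jurisdiction.
    """
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)

# Illustrative, made-up data: 10 applicants per group.
records = (
    [("A", True)] * 5 + [("A", False)] * 5
    + [("B", True)] * 3 + [("B", False)] * 7
)
ratios = impact_ratios(selection_rates(records))
print(flag_groups(ratios))  # prints ['B']
```

A flagged group is a prompt for closer review of the tool and its training data, not proof of discrimination on its own.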

Data Privacy and Security

Handling candidate data comes with strict obligations under laws like GDPR and CCPA. Automated tools must have robust security measures in place to protect personally identifiable information (PII). Ensure your vendors are compliant and transparent about how they handle data.

Transparency and Disclosure

Some jurisdictions now require disclosure. New York City's Local Law 144, for example, requires employers to notify candidates when an automated employment decision tool (AEDT) is used and to have the tool independently audited for bias. Being transparent not only ensures compliance but also builds trust with your applicants.

Human Oversight is Non-Negotiable

Automation should support human decision-making, not replace it entirely. "Human-in-the-loop" systems ensure that critical decisions, especially those involving rejections, are reviewed by a qualified person, adding an extra layer of fairness and accountability.
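
As a rough illustration of a human-in-the-loop rule, the sketch below lets an automated screen advance strong candidates but never reject anyone on its own: everything below the cutoff goes to a reviewer queue. The `ScreeningResult` type, the score range, and the 0.7 cutoff are all hypothetical, chosen only to show the routing pattern.

```python
from dataclasses import dataclass

@dataclass
class ScreeningResult:
    candidate_id: str
    score: float  # hypothetical model score between 0 and 1

def route(result, advance_threshold=0.7):
    """Decide the next step for a screened candidate.

    The automated step may only advance a candidate. Anything that
    would otherwise be a rejection is sent to a human reviewer.
    """
    if result.score >= advance_threshold:
        return "advance"
    return "human_review"

def build_review_queue(results, advance_threshold=0.7):
    """Collect the IDs of candidates who need a human decision."""
    return [
        r.candidate_id
        for r in results
        if route(r, advance_threshold) == "human_review"
    ]

# Illustrative, made-up results.
results = [
    ScreeningResult("c-001", 0.91),
    ScreeningResult("c-002", 0.42),
    ScreeningResult("c-003", 0.69),
]
print(build_review_queue(results))  # prints ['c-002', 'c-003']
```

The design choice here is that the function has no "reject" outcome at all, so a rejection can only be recorded by a person.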

Compliance isn't a one-time checkbox; it's an ongoing commitment. By staying informed and choosing the right partners, you can harness the power of AI safely and responsibly.